Copyright was built for humans. AI has changed the context

23 February 2026, 4 min read

Australia’s AI copyright debate is revealing a deeper challenge: balancing innovation with fairness to original creators.

Introduction

Leading Artificial Intelligence (AI) companies are seeking amendments to Australia’s copyright framework, calling for a new text and data mining exception that would allow AI models to be trained on copyrighted works.

At the centre of the debate are the rights of authors, artists and creators. Under Australia’s copyright laws, creators hold exclusive economic rights to copy, publish, communicate and publicly perform their works. These rights exist automatically and without registration.

The Australian Writers Guild has said the proposed exception would “retroactively legalise the theft of Australia’s creative workers’ intellectual property… by foreign multi-nationals”.

As governments and courts grapple with this issue, copyright is no longer just a technical or legal concern. It is becoming a test of fairness, consent and trust.

Policy Landscape – What has been announced to date?

- In December 2023, the former Attorney-General, the Hon. Mark Dreyfus MP, announced the establishment of the Copyright and AI Reference Group (CAIRG).

- In November 2024, the Senate Select Committee on Adopting AI recommended that AI products be transparent about their use of copyrighted material, and that the Government consider a mechanism to pay creators for “commercial AI-generated outputs based on copyrighted material used to train AI systems”.

- In August 2025, the Productivity Commission’s Interim Report on Harnessing Data and Digital Technology sought feedback on a text and data mining exception. The proposal drew significant opposition, with 728 post-interim submissions received. Peak creative industry bodies, including the Australian Recording Industry Association (ARIA), APRA AMCOS, the Australian Society of Authors, and the Copyright Agency, argued that a broad exception would strip creators of bargaining power and favour multinational technology companies at the expense of local creative industries.

- In October 2025, Attorney-General the Hon. Michelle Rowland MP ruled out a broad text and data mining exception, stating “there are no plans to weaken copyright protections when it comes to AI”. The Government convened CAIRG to discuss three priority areas:

  - Encourage fair, legal avenues for using copyright material in AI: examining whether a new paid collective licensing framework under the Copyright Act should be established, or whether to maintain a voluntary licensing framework.

  - Improve certainty: exploring how copyright law applies to material generated through the use of AI.

  - Avenues for less costly enforcement: including a potential new small claims forum for lower-value copyright infringement matters.

- In December 2025, the Productivity Commission’s Final Report on Harnessing Data and Digital Technology concluded it would be “premature to make changes to Australia’s copyright laws”. It recommended the Government monitor the development of AI and its interaction with copyright holders over the next three years, and only then consider establishing an independent review if key issues remain unresolved. The Commission recognised that licensing markets are already developing for many types of copyright material, and that licensing “creates more incentives for the production of new creative content, to the benefit of both the public and AI developers”.

Potential Impact

The companies that own these models, as well as the Technology Council of Australia, argue that without a text and data mining exception, Australia is an unattractive location for investment and risks missing the ‘AI boom’ in data centre funding. However, AI investment continues to flow into countries where copyright implications are equally uncertain, suggesting this argument may be overstated.

How models are trained is just one aspect of the broader copyright debate. The outputs these models generate are also creating significant legal and commercial disputes.

In December 2025, Disney sent a cease-and-desist letter to Google, accusing it of copyright infringement on a “massive scale” through its AI tools. Google’s Gemini AI was found to generate images of Disney characters including Darth Vader, Princess Elsa and Deadpool as figurines – a viral trend that Google’s own CEO had promoted.

In the same week, Disney signed a $1 billion licensing deal with OpenAI, signalling the emerging commercial model: license with partners, enforce against others. Earlier in 2025, Disney and Universal filed a landmark lawsuit against AI image generator Midjourney – the first major action by Hollywood studios against an AI company.

In Australia, there have been reports of AI-generated Aboriginal art prints being widely available for purchase and, separately, of AI-generated Aboriginal images being offered on websites that sell royalty-free stock images and videos.

So far, the Government has sided with creators, explicitly ruling out a broad text and data mining exception.

The response from Australia’s creative industries has been overwhelmingly supportive:

- ARIA’s CEO Annabelle Herd described the copyright system as “robust, fit for purpose, and should be allowed to do its job in protecting the value of Australian culture”.

- APRA AMCOS CEO Dean Ormston said the Productivity Commission had “recognised what the creative sector has argued for over two years – that licensing of creative copyright content provides the pathway for AI development while ensuring creators are fairly compensated”.

- News Corp Australia’s Michael Miller called the Government’s decision a “welcome catalyst for tech and AI companies to license Australian content”.

It remains uncertain what the policy solution to this fast-moving challenge will be. Much will depend on the outcomes of CAIRG and the three-year monitoring period recommended by the Productivity Commission.

While the Commission concluded it is too soon for legislative change, it also flagged a deeper question: whether “copyright continues to be the appropriate vehicle to incentivise creation of new works and, if not, what alternatives could be pursued”.

This suggests the debate is far from settled.

Why trust is now the real issue

At its core, this debate rests on a simple principle: AI systems are not authors, and innovation should not be built on the unconsented extraction of human creativity.

Yet AI requires human creativity to learn. The policy challenge is finding the right balance between enabling AI innovation and investment and ensuring creators are fairly compensated.

The Government’s current direction – licensing frameworks over blanket exceptions – reflects a clear preference for that balance. But the rules are still being written.

For organisations, the risk has shifted. This is no longer just a copyright compliance question – it’s a trust and legitimacy question. The organisations that will be most exposed are not only AI developers, but any brand deploying generative AI in marketing, communications, customer service, design, recruitment or product development.

Key implications organisations need to consider:

- Reputation can move faster than regulation: Even if your AI use is technically lawful, stakeholders may still view it as unfair or extractive. The reputational test is increasingly about how content is sourced and who benefits, not just whether it meets a legal threshold.

- Creator backlash is a stakeholder issue, not a niche industry issue: If your organisation uses AI-generated outputs in public-facing work (for example, campaigns, imagery, audio, video or communications materials), you may be drawn into disputes about attribution, cultural appropriation and remuneration.

- Policy volatility is now part of the operating environment: Copyright and AI settings are still in motion. Organisations should assume the rules, expectations and enforcement pathways will tighten over time. Yesterday’s acceptable practice may become tomorrow’s headline.

1

April

2026

The Prime Minister’s National Address

Read news article

1

April

2026

The Prime Minister’s National Address

Download White Paper

26

March

2026

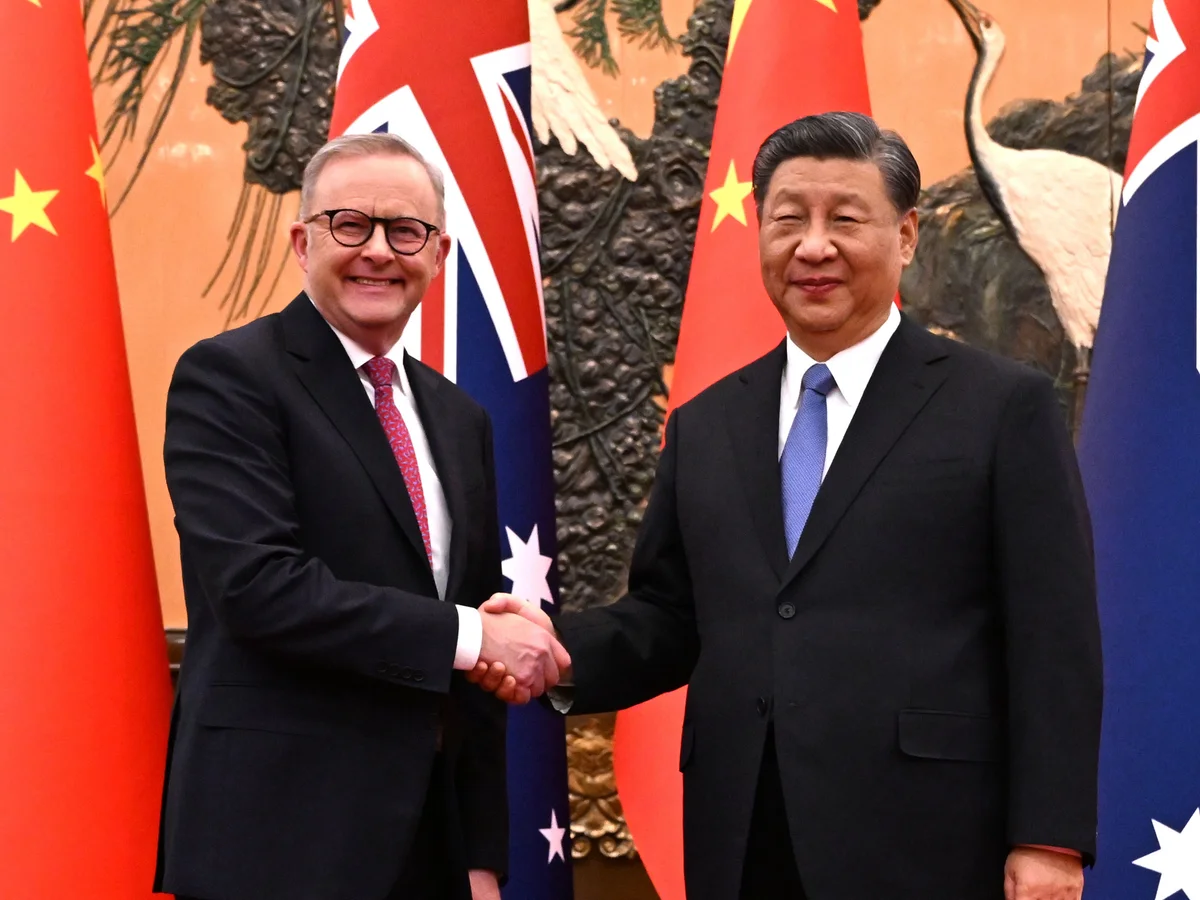

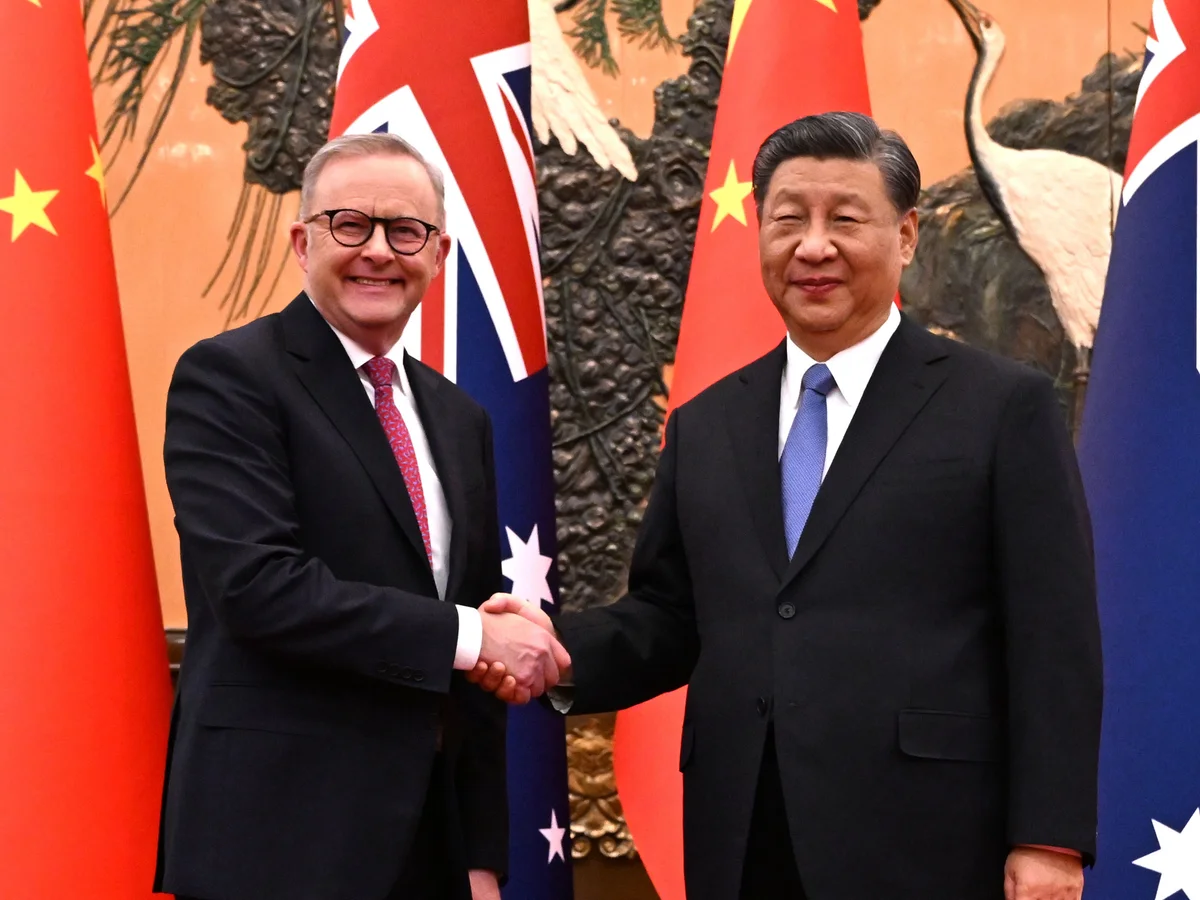

A Decade of the China Australia Free Trade Agreement: What Comes Next?

Read news article

26

March

2026

A Decade of the China Australia Free Trade Agreement: What Comes Next?

Download White Paper